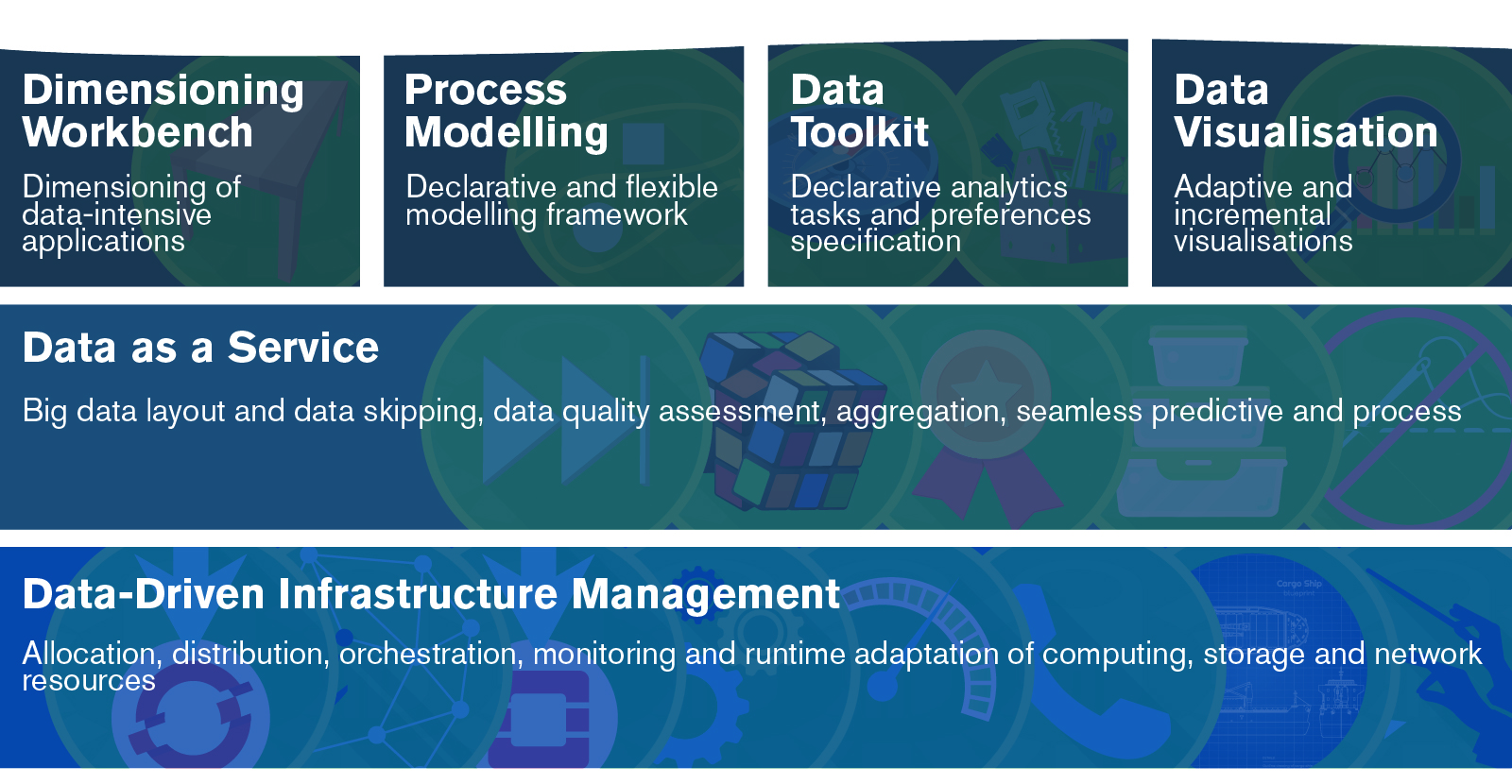

In this series, BigDataStack puts each of its six services under the magnifying glass, highlighting its software components, their functionality and their place in BigDataStack infrastructure management. Each service consists of a set of software components that enable specific Big Data applications and operations, which are showcased throughout the series. To give you a quick understanding of the added value of the BigDataStack software for your own business, BigDataStack partners have prepared a series of videos. This instalment looks at the Data Toolkit, with its declarative specification of analytics tasks and preferences.

The BigDataStack Data Toolkit Service

The BigDataStack Data Toolkit aims at openness, extensibility and wide adoption. The toolkit allows the ingestion of data analytics functions and the definition of analytics in a declarative way. It lets data scientists and administrators specify requirements and preferences for the data, for the parameters of the analytic functions and for infrastructure management. This BigDataStack service is composed of the Data Toolkit and Process Mapping software components, and is visualised through the Data Visualisation technologies to improve its usability.

Process Mapping

The Process Mapping software component targets the problem of automatically identifying or recommending the best algorithm from a set of candidate algorithms for a specific data analysis task. Its role is to map each step of a process to a specific algorithmic instance from a given pool of algorithms, thereby achieving “process mapping”.
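As a rough illustration of the idea, the sketch below picks one algorithm from a small pool for a given task by scoring each candidate on the data. It is not the BigDataStack implementation: the `Candidate` class, the `map_step` function, the two toy forecasters and the walk-forward error criterion are all assumptions made for this example.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Callable, Sequence

# Hypothetical types and names, chosen for illustration only.
Predictor = Callable[[Sequence[float]], float]


@dataclass(frozen=True)
class Candidate:
    name: str
    task: str             # the kind of analysis step this algorithm can serve
    predict: Predictor    # predicts the next value from the history so far


def walk_forward_error(predict: Predictor, data: Sequence[float]) -> float:
    """Mean absolute error when each point is predicted from the points before it."""
    errors = [abs(predict(data[:i]) - data[i]) for i in range(1, len(data))]
    return mean(errors)


def map_step(task: str, data: Sequence[float], pool: Sequence[Candidate]) -> Candidate:
    """Map one process step to the candidate with the lowest error on the data."""
    eligible = [c for c in pool if c.task == task]
    if not eligible:
        raise ValueError(f"no candidate algorithm for task {task!r}")
    return min(eligible, key=lambda c: walk_forward_error(c.predict, data))


POOL = [
    Candidate("last_value", "forecast", lambda history: history[-1]),
    Candidate("running_mean", "forecast", lambda history: mean(history)),
]

trend_choice = map_step("forecast", [1, 2, 3, 4, 5], POOL)
noisy_choice = map_step("forecast", [5, 1, 5, 1, 5, 1], POOL)
print(trend_choice.name, noisy_choice.name)
```

On the trending series the last-value forecaster has the lower error, while on the oscillating series the running mean does, so the same step is mapped to different algorithms depending on the data.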

Data Toolkit technologies

The main objective of the Data Toolkit is to design and support diverse data analysis workflows for streaming and batch data processing tasks. Such analysis workflows are designed according to the business requirements. The high-level business process analysis steps are already identified through the Process Modelling Framework, and Process Mapping recommends the data analysis algorithms suitable for each step. The analysis steps then need to be detailed on a scientific basis, leading to an end-to-end analysis workflow that can be realised over an application orchestration engine. Within the BigDataStack context, such an end-to-end analysis workflow is called an analysis playbook. Playbooks are service descriptions consisting of a set of data mining and analysis processes that are interconnected through their input and output data objects, whether streams or batches.
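To make the playbook notion more concrete, here is a minimal sketch that models a playbook as a set of steps linked by named input and output data objects, and derives an order in which an orchestration engine could run them. The `Step` class, the step names and the data object names are hypothetical; the actual BigDataStack playbook format is not shown here.

```python
from dataclasses import dataclass
from graphlib import TopologicalSorter  # standard library, Python 3.9+


@dataclass(frozen=True)
class Step:
    """One analysis process in a playbook (hypothetical structure)."""
    name: str
    inputs: tuple = ()    # names of data objects the step consumes
    outputs: tuple = ()   # names of data objects the step produces


def execution_order(steps):
    """Order steps so that each runs after the producers of its inputs."""
    producer = {out: step.name for step in steps for out in step.outputs}
    # For every step, collect the steps that produce its inputs.
    graph = {
        step.name: {producer[i] for i in step.inputs if i in producer}
        for step in steps
    }
    # static_order() raises graphlib.CycleError if the steps depend on each other.
    return list(TopologicalSorter(graph).static_order())


# Steps are deliberately listed out of order; the links come from the data objects.
PLAYBOOK = [
    Step("report", inputs=("segments",)),
    Step("cluster", inputs=("cleaned",), outputs=("segments",)),
    Step("ingest", outputs=("raw",)),
    Step("clean", inputs=("raw",), outputs=("cleaned",)),
]

order = execution_order(PLAYBOOK)
print(order)
```

Declaring only what each step consumes and produces keeps the description declarative: the execution order is derived from the data dependencies rather than written by hand.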

Open Source

The Data Toolkit is currently implemented on top of existing open source solutions, extended and customised as appropriate. The solutions included are:

- Spring Cloud Data Flow, providing tools to create complex topologies for streaming and batch data pipelines,

- Conductor, supporting orchestration of microservices-based process flows,

- OpenCPU, a system for embedded scientific computing and reproducible research.

How can these software components be implemented in your organisation?

Be inspired by our connected consumer, real-time shipping and smart insurance use cases, and their implementations.

Browse through our services and their software components.

Read our reports on Requirements and State of the Art Analysis.

Or simply drop us an email at contact@bigdatastack.eu